“Responsible AI helps achieve fairness, even though biases are baked into the data; gain trust, although transparency and explainability methods are evolving; and ensure regulatory compliance, while grappling with AI’s probabilistic nature.”

- Svetlana Sicular, Research VP at Gartner

In their recent article for Harvard Business Review, David De Cremer and Garry Kasparov described AI-based machines as “fast, more accurate, and consistently rational,” noting, however, that present-day AI innovations often lack intuition, emotional intelligence, and cultural sensitivity.

While end-to-end automation and the workforce reductions that would follow it are not yet among the artificial intelligence trends for 2022, governments will need to address the ethical implications of AI adoption in their national AI strategies to ensure that automation produces positive outcomes for all parties involved.

When it comes to business AI, companies should focus on creating intelligent systems that:

- explain the rationale behind their decisions, and
- do not discriminate against people based on their ethnicity, age, gender, religion, or place of residence.

Additionally, stakeholders need to clearly communicate the benefits of automation to get employees on board and encourage them to participate in AI deployment and training.

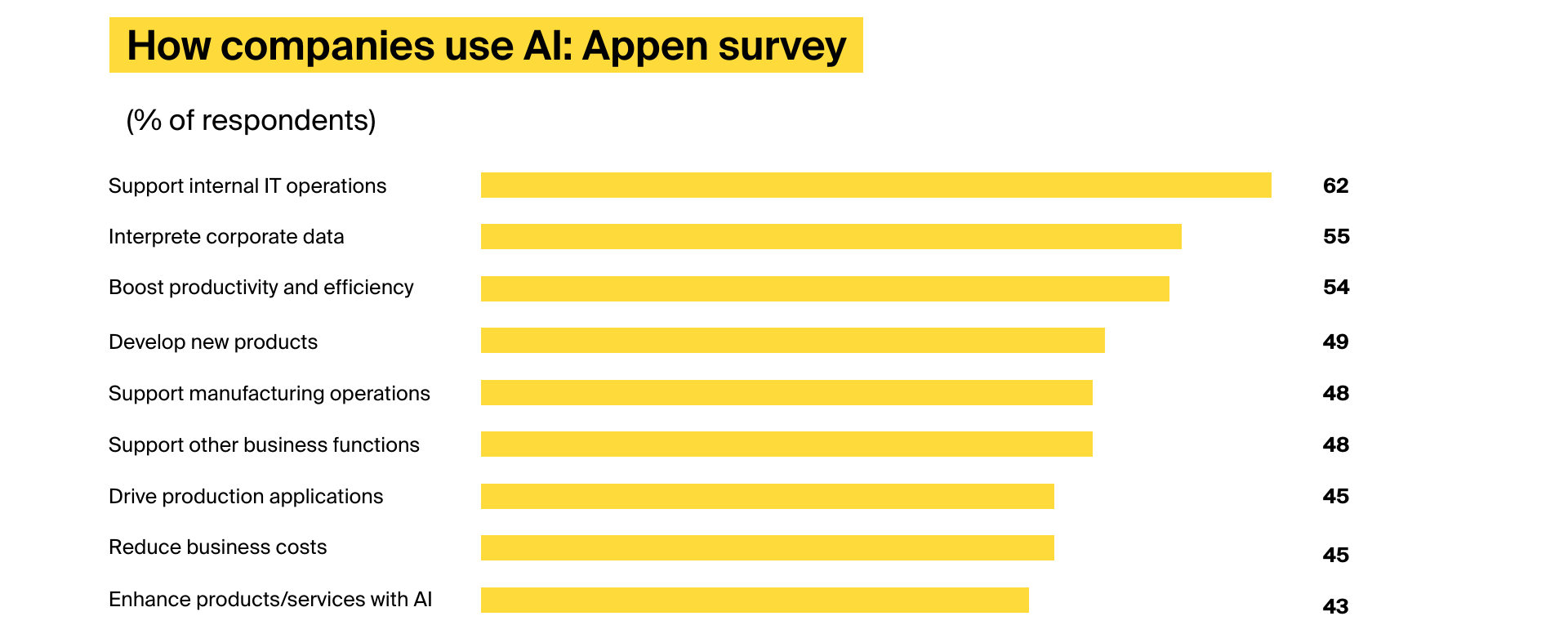

These numbers indicate that ethical, responsible artificial intelligence usage will be one of the defining AI trends for the upcoming year: